Biased AI Service Debugging: Uncovering the Hidden Biases in Your AI Models

Artificial Intelligence (AI) has changed how we live and work, from virtual assistants and self-driving cars to healthcare and finance. Despite that potential, AI is not immune to bias and error. Models can exhibit systematic biases that resemble human cognitive biases, and these can lead to unfair outcomes, incorrect decisions, and a loss of trust in the systems built on them. This article looks at biased AI service debugging: the challenges, tools, and best practices for identifying and mitigating these hidden biases.

The Cognitive Biases in AI

AI models don't just simulate human thinking and language; because they are trained on human-generated data, they can also reproduce patterns that resemble our cognitive biases. Overconfidence, confirmation bias, and anchoring bias are a few examples that can affect AI decision-making. These biases are particularly problematic in areas like healthcare, finance, and law enforcement, where the consequences of an AI system's errors can be severe.
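Of the biases named above, overconfidence is the easiest to check numerically, because it shows up as a gap between how sure a model says it is and how often it is right. The sketch below is one possible way to measure that gap, not a method prescribed by this article. It assumes the service returns a confidence score in [0, 1] with each answer and that labeled outcomes are available. The names `Prediction`, `overconfidence_gap`, and `expected_calibration_error` are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Prediction:
    """One logged model answer (hypothetical record layout)."""
    confidence: float  # model's stated probability of being right, in [0, 1]
    correct: bool      # whether the answer matched the labeled outcome


def overconfidence_gap(preds: List[Prediction]) -> float:
    """Mean stated confidence minus observed accuracy.

    A positive value suggests overconfidence, a negative value underconfidence.
    """
    if not preds:
        raise ValueError("need at least one prediction")
    mean_conf = sum(p.confidence for p in preds) / len(preds)
    accuracy = sum(1 for p in preds if p.correct) / len(preds)
    return mean_conf - accuracy


def expected_calibration_error(preds: List[Prediction], n_bins: int = 5) -> float:
    """Weighted average of |confidence - accuracy| over equal-width confidence bins."""
    if not preds:
        raise ValueError("need at least one prediction")
    bins: List[List[Prediction]] = [[] for _ in range(n_bins)]
    for p in preds:
        # Clamp so that confidence == 1.0 falls into the last bin.
        idx = min(int(p.confidence * n_bins), n_bins - 1)
        bins[idx].append(p)

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        conf = sum(p.confidence for p in bucket) / len(bucket)
        acc = sum(1 for p in bucket if p.correct) / len(bucket)
        ece += (len(bucket) / len(preds)) * abs(conf - acc)
    return ece


if __name__ == "__main__":
    # Made-up log entries, purely for demonstration.
    log = [
        Prediction(0.9, True),
        Prediction(0.9, False),
        Prediction(0.9, False),
        Prediction(0.9, True),
    ]
    print(f"overconfidence gap: {overconfidence_gap(log):.2f}")
    print(f"expected calibration error: {expected_calibration_error(log):.2f}")
```

A single gap number can hide problems that only affect some inputs, so in practice it may be worth computing these metrics separately per user group or task type before drawing conclusions.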

Why Biased AI Service Debugging is Essential

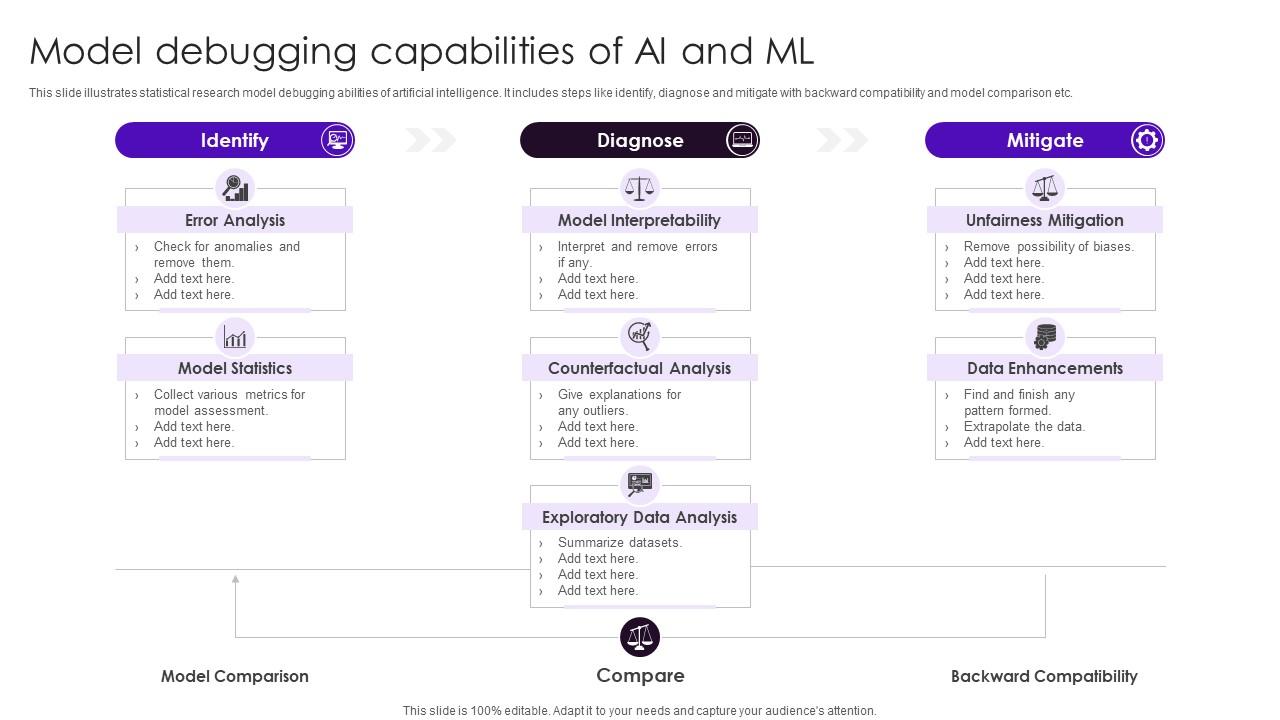

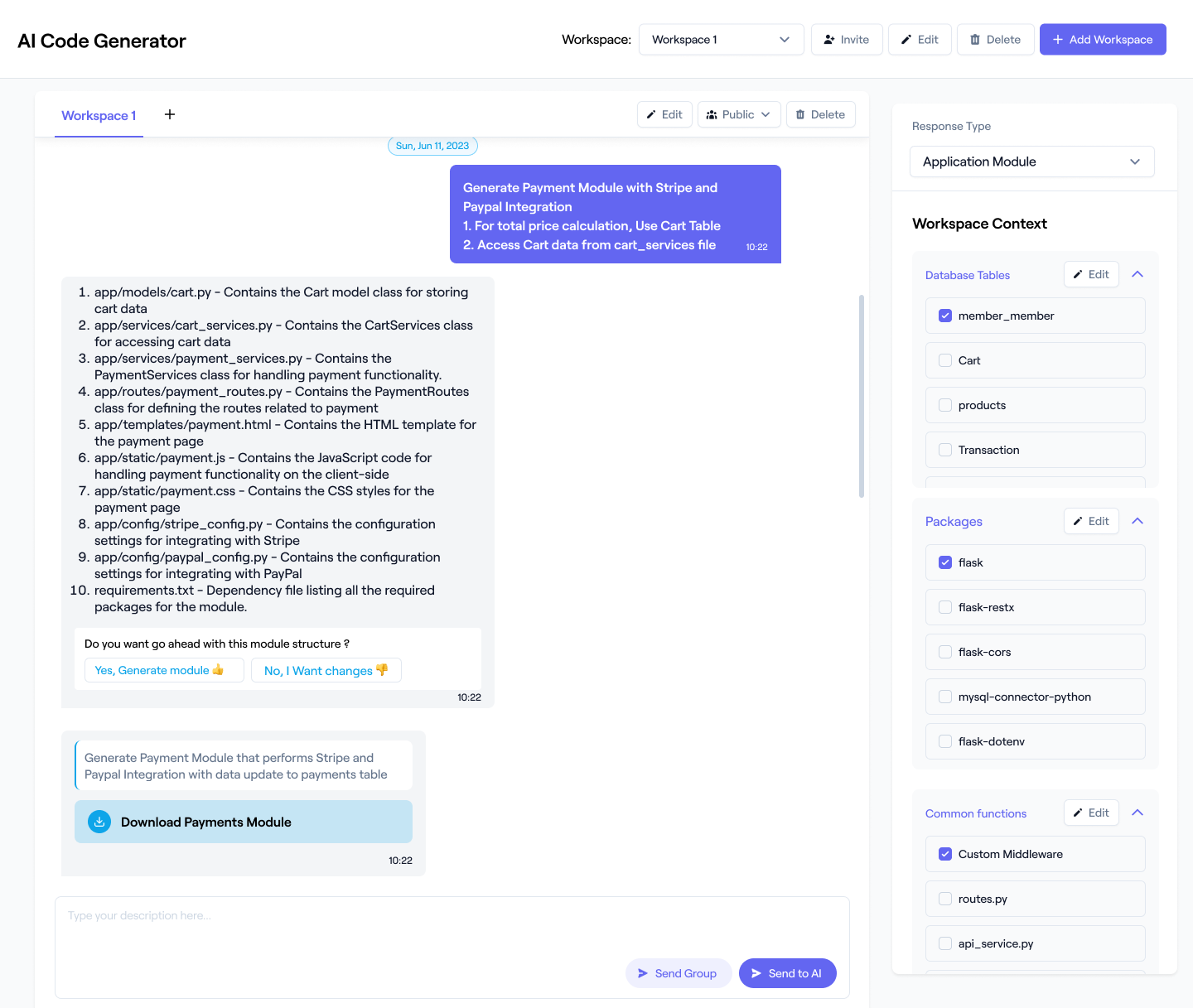

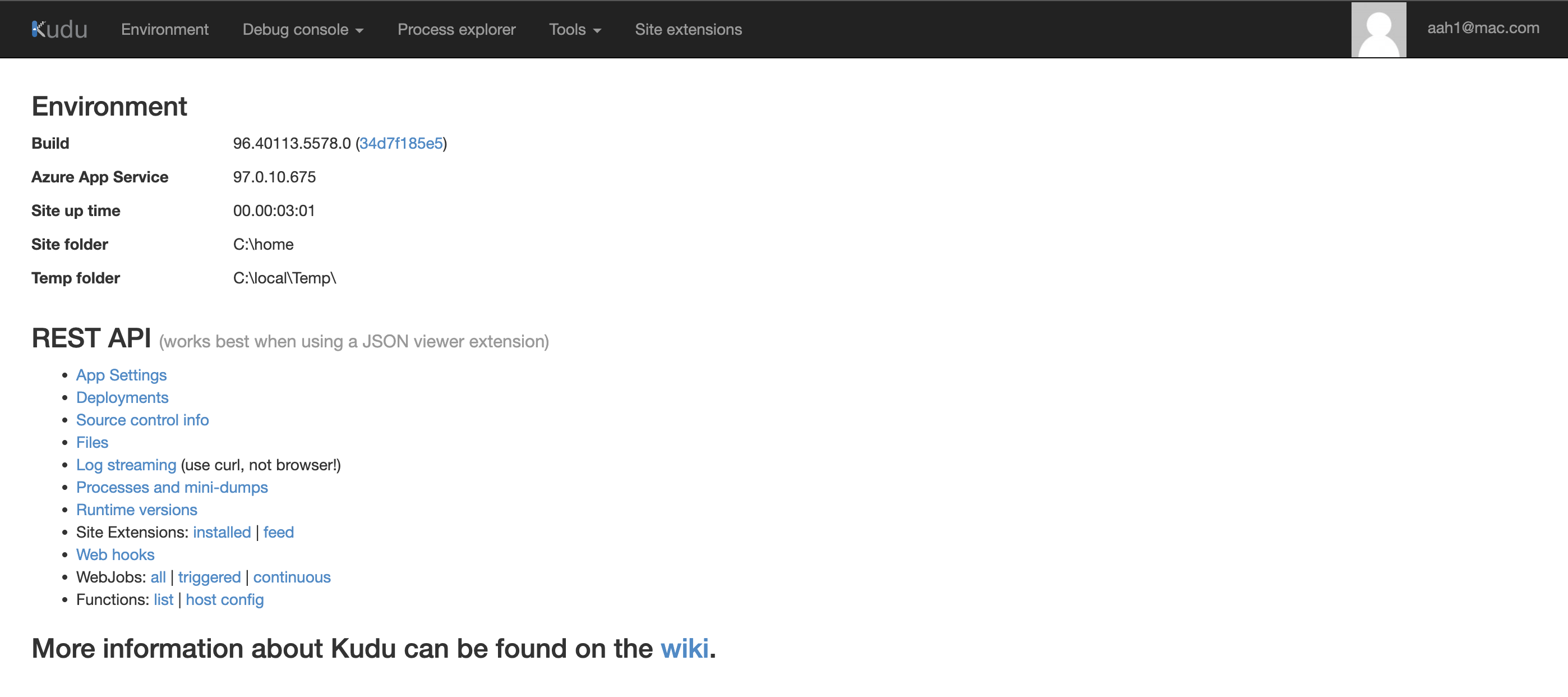

As AI agents move from simple chatbots to complex autonomous systems, finding and fixing their errors gets harder. AgentRx is an automated diagnostic framework that pinpoints critical failures and supports more transparent, resilient agentic systems. Even with advanced diagnostic tools, however, debugging biased AI services remains a significant challenge.