Unveiling the Power of Explainable AI Solutions: Unlocking Transparency and Trust in AI Systems

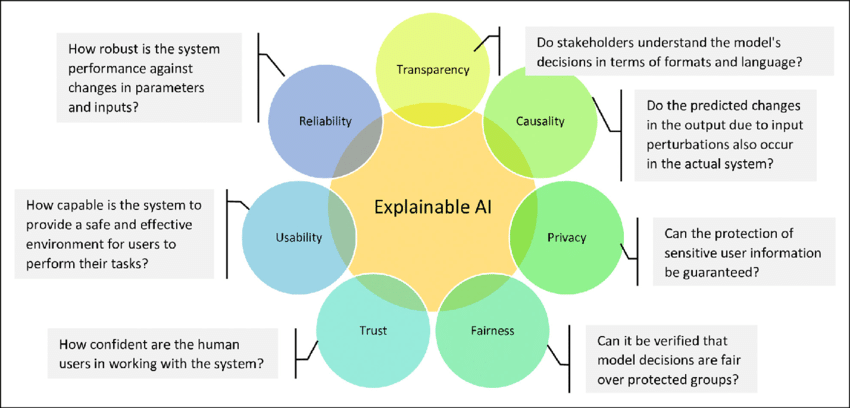

As artificial intelligence (AI) continues to transform industries and change the way we live and work, a pressing concern has emerged: the need for transparency and trust in AI systems. This is where explainable AI (XAI) solutions come into play, enabling human users to comprehend and trust the output of machine learning algorithms.

What Is an Explainable AI Solution?

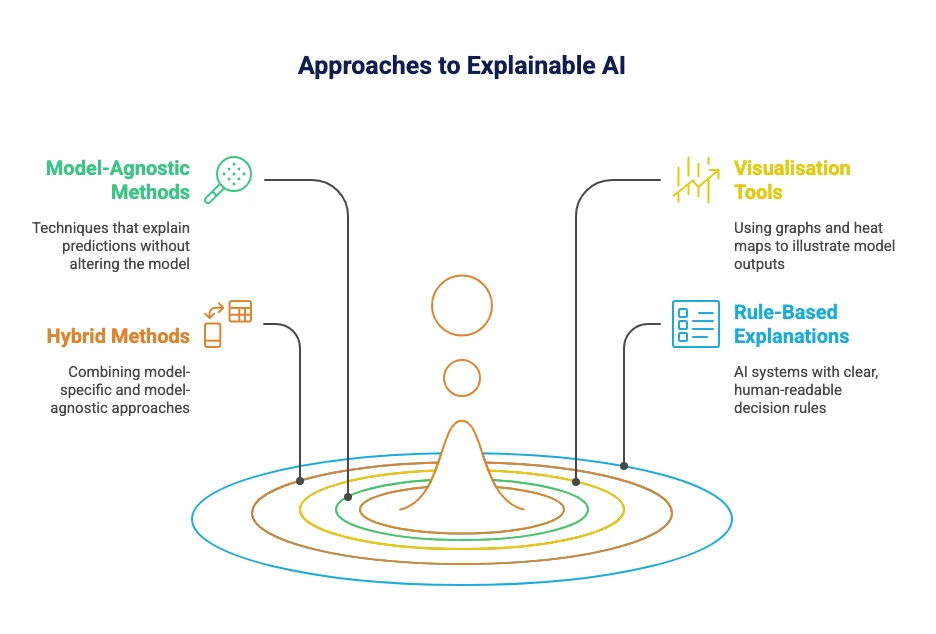

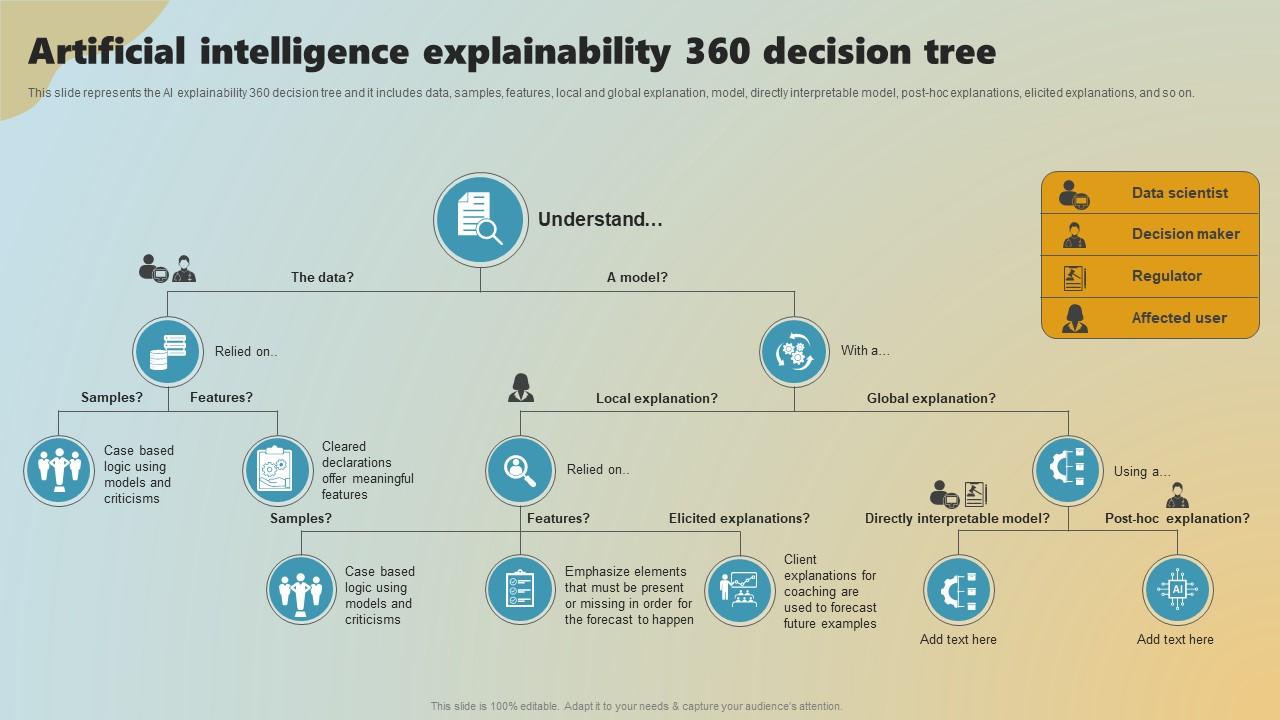

Explainable artificial intelligence (XAI) is a field of research that aims to provide a suite of machine learning techniques that produce more explainable models and enable human users to understand and appropriately trust them. By shedding light on the black-box nature of machine learning algorithms, XAI lets users see which inputs drove a given output instead of accepting the result on faith.
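To make "shedding light on the black box" concrete, here is a minimal sketch of one common model-agnostic technique, permutation importance: shuffle one input feature at a time and measure how much the model's error grows. This is only one of many XAI techniques, and it is not taken from any particular product. The model `black_box`, the synthetic data, and all function names below are hypothetical and chosen purely for illustration.

```python
import random


def black_box(row):
    """Hypothetical opaque model: leans heavily on feature 0,
    weakly on feature 1, and ignores feature 2 entirely."""
    return 3.0 * row[0] + 0.5 * row[1]


def mean_squared_error(model, rows, targets):
    """Average squared difference between predictions and targets."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_features, seed=0):
    """Return, per feature, the increase in error after shuffling that feature.

    A larger increase means the model relies more on that feature.
    """
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        # Shuffle column j across rows, leaving the other columns intact.
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled_rows = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        shuffled_error = mean_squared_error(model, shuffled_rows, targets)
        importances.append(shuffled_error - baseline)
    return importances


# Synthetic data (an assumption for this sketch): 200 rows, 3 features in [-1, 1].
data_rng = random.Random(42)
rows = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
targets = [black_box(r) for r in rows]

scores = permutation_importance(black_box, rows, targets, n_features=3)
for index, score in enumerate(scores):
    print(f"feature {index}: error increase = {score:.4f}")
```

In this toy setup, feature 0 shows the largest error increase and feature 2 shows none, which matches how `black_box` was written. With a real model the same procedure gives a first, rough view of which inputs its predictions depend on.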

The Importance of Explainable AI Solutions

- Enhanced Trust: XAI fosters trust in AI systems by providing transparent and interpretable results, which is essential for building confidence in decision-making processes.

- Improved Decision-Making: By understanding the reasoning behind AI-generated output, humans can make more informed decisions, leading to better outcomes and reduced risks.

- Regulatory Compliance: XAI helps organizations comply with regulatory requirements by providing transparent explanations for AI-driven decisions, which makes bias and errors easier to detect and audit.

- Increased Efficiency: XAI enables humans to identify and fix errors in AI systems more efficiently, reducing the time and resources required for maintenance and updates.